System Resilience: Difference between revisions

m (Text replacement - "SEBoK v. 2.12, released 20 May 2025" to "SEBoK v. 2.12, released 27 May 2025") |

|||

| (38 intermediate revisions by 4 users not shown) | |||

| Line 1: | Line 1: | ||

---- | ---- | ||

'''''Lead Author:''''' ''John Brtis'', '''''Contributing Authors:''''' ''Scott Jackson, | '''''Lead Author:''''' ''John Brtis'', '''''Contributing Authors:''''' ''Ken Cureton, Scott Jackson, Ivan Taylor'' | ||

---- | ---- | ||

Resilience is a relatively new term in the SE realm, appearing | Resilience is a relatively new term in the SE realm, appearing around 2006 and becoming popularized in 2010. The application of “resilience” to engineered systems has led to a proliferation of alternative definitions. While the details of definitions will continue to be debated, the information here should provide a working understanding of the meaning and implementation of resilience, sufficient for an engineer to address it effectively. | ||

==Overview== | ==Overview== | ||

===Definition=== | ===Definition=== | ||

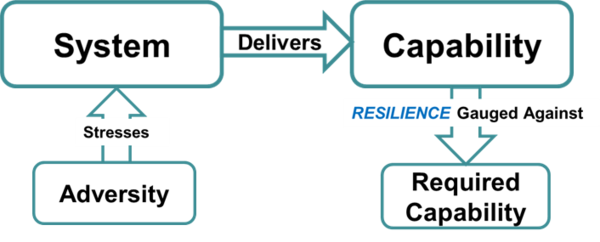

According to the Oxford English Dictionary | According to the Oxford English Dictionary, resilience is “the act of rebounding or springing back” (OED 1973). This definition fits materials that return to their original shape after deformation. For human-made or '''[[Engineered System (glossary)|engineered systems]]''', the definition of '''[[Resilience (glossary)|resilience]]''' can be extended to "maintaining '''[[Capability (glossary)|capability]]''' in the face of an [[Adversity (glossary)|'''adversity''']]." | ||

Some practitioners limit the definition of resilience to only the system reactions following an encounter with an adversity in what is known as the "reactive perspective" regarding system resilience. The "proactive perspective" defines system resilience to include actions that occur ''before'' encountering adversity as well. The INCOSE Resilient Systems Working Group (RSWG) asserts that resilience should address both the reactive and proactive perspectives. | |||

The RSWG defines resilience as "the ability to provide required capability when facing adversity", as depicted in '''Figure 1'''. [[File:System Resilience Figure 1.png|thumb|center|600px|'''Figure 1. General Depiction of Resilience''' (Brtis & McEvilley 2016, Used with Permission)]] | |||

===Scope of the Means=== | ===Scope of the Means=== | ||

In applying this definition, one needs to consider the range of means by which resilience is achieved | In applying this definition, one needs to consider the range of means by which resilience is achieved. The high-level means and fundamental objectives of resilience include avoiding, withstanding, and recovering from adversity (Brtis and McEvilley 2019). “Withstanding” and “recovering” from adversity are classic aspects of resilience. For the purpose of engineered systems, “avoiding” adversity is also an important means of achieving resilience (Jackson and Ferris 2016). Finer-grained, low-level means of achieving resilience are discussed in the taxonomy portion of this article. | ||

===Scope of the Adversity=== | ===Scope of the Adversity=== | ||

An adversity is any condition that could degrade the delivered capability of a system. When discussing system resilience, the full spectrum of sources and types of adversity should be considered. For example, adversity may come from within the system or from the system’s environment, and can be expected or unexpected. Adversity may also come from opponents, friendlies or neutral parties, and if from human sources, can be malicious or accidental (Brtis & McEvilley 2020). | |||

“Stress” and “strain” are two useful concepts for understanding adversities. Adversities may affect the system through a causal chain of adversities. The proximate adversities that affect the system directly (rather than indirectly) are called “stresses” on the system. The effects on the system are referred to as “strains” on the system. | |||

It is important to recognize that risks that can impact a system in the future can cause a detrimental [[Strain (glossary)|'''strain''']] on the system in the present. Systems should be designed to be resilient to future risks, just as they are to current issues. | |||

==Taxonomy for Achieving Resilience== | ==Taxonomy for Achieving Resilience== | ||

A taxonomy | A taxonomy describing the fundamental objectives of resilience and the means for achieving those objectives is valuable to the engineer developing a resilient design. It is important to distinguish “fundamental objectives” from “means objectives” and their impact on trades and engineering decision-making (Clemen & Reilly 2001; Keeney 1992). | ||

A three-layer objectives-based taxonomy that implements this distinction is provided below. The first level addresses the fundamental objectives of resilience, the second level addresses the means objectives of resilience, and the third level addresses architecture, design, and operational techniques for achieving resilience. The three layers are related by many-to-many relationships. The terms and their descriptions are, in many cases, tailored to best address the context of resilience. (Most of the taxonomy content came from Brtis (2016), Jackson and Ferris (2013), and Winstead (2020), with other content developed over time by the RSWG.) | |||

=== Taxonomy Layer 1: The Fundamental Objectives of Resilience === | === Taxonomy Layer 1: The Fundamental Objectives of Resilience === | ||

''Fundamental'' objectives are the first level decomposition of resilience objectives. They establish the scope of resilience. They identify the values pursued by resilience. They represent an extension of the definition of resilience. They are ends in themselves rather than just means to other ends. They should be relatively immutable. Being resilient means achieving three fundamental objectives: | ''Fundamental'' ''objectives'' are the first level decomposition of resilience objectives. They establish the scope of resilience. They identify the values pursued by resilience. They represent an extension of the definition of resilience. They are ends in themselves rather than just means to other ends. They should be relatively immutable. Being resilient means achieving three fundamental objectives: | ||

* '''Avoid adversity:''' eliminate or reduce exposure to [[Stress (glossary)|'''stress''']] | |||

* '''Withstand adversity:''' resist capability degradation when stressed | |||

* '''Recover from adversity:''' replenish lost capability after degradation | |||

These ''fundamental'' objectives can be achieved by pursuing ''means'' objectives. Means objectives are not ends in themselves. Their value resides in helping to achieve the three fundamental objectives. | These ''fundamental'' objectives can be achieved by pursuing ''means'' objectives. Means objectives are not ends in themselves. Their value resides in helping to achieve the three fundamental objectives. | ||

=== Taxonomy Layer 2: Means Objectives === | === Taxonomy Layer 2: Means Objectives === | ||

''Means objectives'' are not ends in themselves but enable achieving the fundamental objectives in Layer 1. This level tends to reflect the stakeholder perspective. The means objectives are high-level functional requirements that should be considered during the stakeholder needs and requirements definition process. The definitions and descriptions are specific to how each concept is applied for resilience. | |||

* '''adapt:''' be or become able to deliver required capability in changing situations. This allows systems to function in changing conditions. Adaptability addresses known-unknowns, and potentially, unknown-unknowns. It may be real-time, near-term, or long-term. The system may adapt itself or be adapted by external actors. The driving situation changes may be in the adversities, environment, system state, required capability, mission, business, stakeholders, stakeholder needs and requirements, system requirements, system architecture, or design. Adaptability is related to [[Flexibility (glossary)|'''flexibility''']], [[Agility (glossary)|'''agility''']], and evolution, and serves as a complement to the “preserve integrity” objective. (See the entry for "evolve" below as well.) Examples of adaptability include adding a crumple zone to an automobile, streaming services shifting to different modalities when faced with unexpectedly high loads or anomalies, self-healing systems, etc. | |||

* '''anticipate:''' (1) consider and understand potential adversities, their consequences, and appropriate responses -- before the system is stressed; and/or (2) develop and maintain courses of design and operation that address predicted adversity. Anticipation facilitates the mitigation of risks before they manifest. The "anticipate" objective is similar to the “prepare” objective. Examples include use of prognostic data, predictive maintenance, hurricane early warning systems, and emergency response planning. | |||

* '''constrain:''' withstand stress by limiting damage propagation within the system. Examples include fault containment, circuit breakers, fire walls, multiple independent levels of security, vaccines, amputation, etc. | |||

* '''continue:''' withstand and recover from stress to deliver required capability, while and after being stressed. Examples include fail-safe, backup capability, redundant capability, fault tolerance, rapid recovery, etc. | |||

* '''degrade gracefully:''' withstand stress by transitioning to desirable system state(s) after degradation. This allows partial useful functionality instead of complete system failure. Examples include automobile crumple zone absorption of kinetic energy, deorbiting a satellite, safety system engagement, and failing over to backup systems. | |||

* '''disaggregate:''' disperse functions, systems, or system elements. This eliminates a single point of failure and eliminates a single target for opponents. Examples include processor load distribution, mechanical/electrical/thermal load distribution, networks, the nuclear triad, etc. | |||

* '''evade:''' avoid adversity through design or action. Examples include stealth and maneuver. | |||

* '''evolve:''' adapt over time. Examples include: B-52 transition to multiple roles, upgrading software-defined radio capabilities, and adoption of fuel injection to address fuel economy needs. | |||

* '''fortify:''' strengthen the system to withstand stress. Examples include shielding, strengthening weakest “links,” and protective aircraft cockpits. | |||

* '''manage complexity:''' reduce adversity or its effects by eliminating unnecessary complexity and the resulting unintended consequences. Unintended consequences can be or can create an adversity, or they may cause an inappropriate response to adversity. Examples include the principle of “Keep it Simple Stupid” and suppressing undesirable emergent behavior. | |||

* '''minimize adversity:''' avoid adversity by reducing the amount or effectiveness of adversities. Examples include battlefield preparation, and fighting an infection (biological). | |||

* '''minimize faults:''' avoid adversity by reducing the likelihood and severity of adversities arising within the system, such as by using system elements with a long mean time to failure. | |||

* '''monitor:''' observe and evaluate changes or developments that could lead to degradation. This allows projection and anticipation of future status, to allow early detection and early response. An example would be smart grid real-time power monitoring. | |||

* '''preserve integrity:''' withstand adversity by remaining complete and unaltered. This is a complement to “adapt/evolve.” Examples include blockchain, which provides tamper-proof data logging and cryptographic digital signatures. | |||

* '''prevent:''' avoid degradation by precluding the realization of strain on the system. Examples include backup power to serve critical system capability, anti-corrosion coatings, etc. | |||

* '''reduce vulnerability''': better withstand adversity by identifying system vulnerabilities and modifying the system to reduce the degradation caused by adversity. Examples include performing and acting on Failure Modes and Effects Analysis (FMEA), reducing the attack surface, hardening vulnerable components, and flood-resistant urban infrastructures. | |||

* | * '''repair:''' recover by fixing damage. | ||

** | * '''replace:''' recover by substituting a capable element for a degraded one. | ||

* '''tolerate:''' withstand degraded capability. Examples include excess margin, RAID storage, and aircraft hydraulic redundancy. | |||

* '''understand:''' develop and maintain useful representations of required system capabilities, how those capabilities are generated, the system environment, and the potential for degradation due to adversity. Examples include digital twins, and battlefield simulations. | |||

=== Taxonomy Layer 3: Architecture, Design, and Operational Techniques to Achieve Resilience Objectives === | === Taxonomy Layer 3: Architecture, Design, and Operational Techniques to Achieve Resilience Objectives === | ||

Architecture, design, and operational techniques that may achieve resilience objectives | Architecture, design, and operational techniques that may achieve resilience objectives are listed below. This level tends to represent the system viewpoint, and should be considered during the system requirements, system architecture, design definition, and operation processes. The definitions and descriptions are specific to how each concept is applied for resilience. | ||

*'''absorption:''' withstanding adversity by assimilating stress without unacceptable degradation of the system capability. Examples include dissipating kinetic energy with automobile crumple zones. | |||

*'''adaptability''': see “adapt” in layer 2 above. Adaptability has commonality with flexibility, agility, improvising, and overcoming. | |||

* '''anomaly detection:''' discovering salient abnormalities in the system or its environment to enable effective response. | |||

* '''backward recovery:''' recovery to a previous stable state of a system. | |||

* '''boundary enforcement:''' implementing the process, temporal, and spatial limits to protect the system. | |||

** '''boundary enforcement:''' implementing the process, temporal, and spatial limits | * '''buffering:''' reducing the effect of degradation through excess capacity. | ||

* '''coordinated defense:''' having multiple, synergistic mechanisms to protect required capability, such as via the defense-in-depth strategy. | |||

* '''deception:''' confusing and thus impeding an adversary. | |||

* '''defense-in-depth:''' minimizing loss by employing multiple coordinated mechanisms, each of which can at least partially achieve resilience. | |||

* '''detection avoidance:''' reducing an adversary's awareness of the system. Examples include stealth. | |||

* '''distributed privilege:''' requiring multiple authorized entities to act in a coordinated manner before a system function can proceed. Examples include authorization for use of nuclear weapons. | |||

* '''distribution:''' spreading the system’s ability to perform – physically or virtually. | |||

* '''diversification:''' use of heterogeneous design techniques to minimize common vulnerabilities and common mode failures. Examples include heterogeneous technologies, data sources, processing locations, equipment locations, supply chains, and communications paths. | |||

* '''domain separation:''' physically or logically isolating items with different protection needs. | |||

* '''drift correction:''' monitoring the system’s movement toward the boundaries of proper operation and taking corrective action. | |||

* '''dynamic positioning:''' relocation of system functionality or components to confound opponent understanding of the system. | |||

* '''effect tolerance:''' providing required capability despite damage to the system. | |||

* '''error recovery:''' detection, control, and correction of internal errors. | |||

* '''fail soft:''' prioritizing and gradually terminating affected functions when failure is imminent. | |||

* '''fault tolerance:''' providing required capability in spite of faults. | |||

* '''forward recovery:''' recovery by restoring the system to a new, not previously occupied state in which it can perform required functions. | |||

* '''human participation:''' including people as part of the system. A human in the loop brings a unique capability for agile and adaptable thinking and action. | |||

* '''least functionality:''' system elements accomplish their required functions, but no more. | |||

* '''least persistence:''' system elements are available, accessible, and fulfill their design intent only while needed. | |||

* '''least privilege:''' system elements are allocated authorizations necessary to accomplish their specified functions, but not more. | |||

* '''least sharing:''' system resources are accessible by multiple system elements only when necessary, and to as few system elements as possible. | |||

* '''loose coupling''': minimizing the interdependency of elements. This can reduce the potential for propagation of damage. | |||

* '''loss margins:''' excess capability, so partial capability degradation is acceptable. | |||

* '''maintainability:''' ability to be retained or restored to perform as required. | |||

* '''mediated access:''' controlling the ability to access and use system elements. | |||

* '''modeling and analytic monitoring:''' developing a representation of the system, then gathering and analyzing data based on that understanding to identify vulnerabilities, find indications of potential or actual adverse conditions, identify potential or actual system degradation, and evaluate the efficacy of system countermeasures. | |||

* '''modularity:''' composing a system of discrete elements so that a change to one component has a minimal impact on other elements. | |||

** '''modularity:''' | * '''neutral state:''' providing a condition of the system where stakeholders (especially operators) can safely take no action while awaiting determination of the most appropriate action. | ||

* '''protection:''' mitigation of stress to the system. | |||

* '''protective defaults:''' providing default configurations of the system that provide protection. | |||

* '''protective failure:''' ensuring that failure of a system element does not result in an unacceptable loss of capability. | |||

* '''protective recovery:''' ensuring that recovery of a system element does not result in unacceptable loss of capability. | |||

* '''realignment:''' reconfiguring the system architecture to improve the system’s resilience. | |||

* '''rearchitect/redesign:''' modifying the system elements or system structure for improved resilience. | |||

* '''redeploy:''' reorganize resources to provide required capabilities and address adversity or degradation. Examples include rapid launch to replace satellites lost from a constellation and emergency vehicle repositioning during disasters. | |||

* '''redundancy:''' having more than one means for performing the required function. This can mitigate single point failures. An example is multiple pumps in parallel. | |||

* '''redundancy (functional):''' achieving redundancy by heterogeneous means. This can mitigate common mode failures and common cause failures. This is also called “heterogeneous redundancy.” | |||

* '''redundancy (physical):''' achieving redundancy by more than one identical element. | |||

* '''repairability:''' the ease with which a system can be restored to an acceptable condition. | |||

* '''replacement:''' changing system elements to regain capability. | |||

* '''safe state:''' providing the ability to transition to a state that does not lead to unacceptable loss of capability. | |||

* '''segmentation:''' separation (logically or physically) of elements to limit the spread of damage. | |||

* '''shielding:''' interposition of items (physical or virtual) that inhibit the adversity’s ability to stress the system. | |||

* '''substantiated integrity''': ability to ensure and prove that system elements have not been corrupted. | |||

* '''substitution:''' using new system elements to provide or restore capability. | |||

* '''unpredictability:''' making changes randomly that confound an opponent’s understanding of the system. | |||

* '''virtualization''': creating a simulated, rather than actual, version of something. This facilitates stealth, dynamic positioning, and unpredictability. Examples include virtual computer hardware platforms, storage devices, and network resources. | |||

The means objectives and architectural and design techniques will evolve as the resilience engineering discipline matures. | |||

==The Resilience Process== | |||

Resilience should be considered throughout a system's life cycle, but especially in the early life cycle activities that lead to resilience requirements. Once resilience requirements are established, they can and should be managed along with all the other requirements in the trade space. Specific considerations for early life cycle activities include the items listed below (Brtis and McEvilley 2019). | |||


* '''Business or Mission Analysis Process''' | * '''Business or Mission Analysis Process''' | ||

** Defining the problem space should include identification of adversities and expectations for performance under those adversities. | ** Defining the problem space should include identification of adversities and expectations for performance under those adversities. | ||

** ConOps, OpsCon, and solution classes should consider the ability to avoid, withstand, and recover from | ** ConOps, OpsCon, and solution classes should consider the ability to avoid, withstand, and recover from adversities. | ||

** Evaluation of alternative solution classes must consider ability to deliver required capabilities under adversity. | ** Evaluation of alternative solution classes must consider ability to deliver required capabilities under adversity. | ||

* '''Stakeholder Needs and Requirements Definition Process''' | * '''Stakeholder Needs and Requirements Definition Process''' | ||

** The stakeholder set should include | ** The stakeholder set should include people who understand potential adversities and stakeholder resilience needs. | ||

** When identifying stakeholder needs, identify expectations for capability under adverse conditions and degraded/alternate, but useful, modes of operation. | ** When identifying stakeholder needs, identify expectations for capability under adverse conditions and degraded/alternate, but useful, modes of operation. | ||

** Operational concept scenarios should include resilience scenarios. | ** Operational concept scenarios should include resilience scenarios. | ||

| Line 134: | Line 134: | ||

* '''System Requirements Definition Process''' | * '''System Requirements Definition Process''' | ||

** Resilience should be considered in the identification of requirements. | ** Resilience should be considered in the identification of requirements. | ||

** Achieving resilience and other adversity-driven considerations should be addressed | ** Achieving resilience and other adversity-driven considerations should be addressed in concert. | ||

* '''Architecture Definition Process''' | *'''Architecture Definition Process''' | ||

** Selected viewpoints should support the representation of resilience. | ** Selected viewpoints should support the representation of resilience. | ||

** Resilience requirements can significantly limit and guide the range of acceptable architectures. | ** Resilience requirements can significantly limit and guide the range of acceptable architectures. Resilience requirements must be mature when used for architecture selection. | ||

** Individuals developing candidate architectures should be familiar with architectural techniques for achieving resilience. | ** Individuals developing candidate architectures should be familiar with architectural techniques for achieving resilience. | ||

** Achieving resilience and other adversity-driven considerations should be addressed | ** Achieving resilience and other adversity-driven considerations should be addressed in concert. | ||

* '''Design Definition Process''' | *'''Design Definition Process''' | ||

** Individuals developing candidate designs should be familiar with design techniques for achieving resilience. | ** Individuals developing candidate designs should be familiar with design techniques for achieving resilience. | ||

** Achieving resilience and the other adversity-driven considerations should be addressed | ** Achieving resilience and the other adversity-driven considerations should be addressed in concert. | ||

* '''Risk Management Process''' | *'''Risk Management Process''' | ||

** Risk management should be planned to handle risks, issues, and opportunities identified by resilience activities. | ** Risk management should be planned to handle risks, issues, and opportunities identified by resilience activities. | ||

==Resilience Metrics== | |||

Commonly used resilience metrics include the following (Uday and Marais 2015): | |||

* Time duration of failure | |||

* Time duration of recovery | |||

* Ratio of performance recovery to performance loss | |||

* The speed of recovery | |||

* Performance before and after the disruption and recovery actions | |||

* System importance measures | |||

However, resilience principles that are implied or substantiated by recommendations by domain experts are often missing in practice (Jackson and Ferris 2013). This has led to the development of a new metric for evaluating various systems in domains (aviation, fire protection, rail, and power distribution) to address resilience principles that are often omitted (Jackson 2016). | |||

Numerous candidate resilience metrics have also been identified, including the following (Brtis 2016): | |||

* Maximum outage period | |||

* Maximum brownout period | |||

* Maximum outage depth | |||

* Expected value of capability: the probability-weighted average of capability delivered | |||

* Threat resiliency; i.e., the time-integrated ratio of the capability provided divided by the minimum needed capability | |||

* Expected availability of required capability; i.e., the likelihood that for a given adverse environment the required capability level will be available | |||

* Resilience levels; i.e., the ability to provide required capability in a hierarchy of increasingly difficult adversity | |||

* Cost to the opponent | |||

* Cost-benefit to the opponent | |||

* Resource resiliency; i.e. the degradation of capability that occurs as successive contributing assets are lost | |||
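Several of the candidate metrics above can be computed from a sampled capability time series. The following is a minimal sketch, not a definitive implementation. It assumes that "outage" means delivered capability at or below zero, that "brownout" means capability above zero but below the required level, that "outage depth" is the largest shortfall below the required level, and that the samples are evenly spaced. The function names and the sample data are hypothetical.

```python
from typing import Callable, Sequence


def longest_run(samples: Sequence[float],
                is_degraded: Callable[[float], bool],
                dt: float = 1.0) -> float:
    """Duration of the longest contiguous run of samples flagged as degraded."""
    longest = current = 0
    for value in samples:
        # Extend the current run while degraded, otherwise reset it.
        current = current + 1 if is_degraded(value) else 0
        longest = max(longest, current)
    return longest * dt


def max_outage_depth(samples: Sequence[float], required: float) -> float:
    """Largest shortfall below the required capability (0 if never short)."""
    return max(0.0, required - min(samples))


def threat_resiliency(samples: Sequence[float],
                      minimum_needed: float,
                      dt: float = 1.0) -> float:
    """Rectangle-rule integral of (capability provided / minimum needed)."""
    return sum(value / minimum_needed for value in samples) * dt


# Hypothetical capability trace, one sample per time unit.
capability = [100, 100, 60, 0, 0, 40, 80, 100]
required = 80.0

max_outage = longest_run(capability, lambda c: c <= 0)
max_brownout = longest_run(capability, lambda c: 0 < c < required)
depth = max_outage_depth(capability, required)
resiliency = threat_resiliency(capability, required)
```

Other definitions of outage and brownout are possible; only the predicate passed to `longest_run` would need to change.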

Multiple metrics may be required depending on the situation. If one has to select a single most effective metric for reflecting the meaning of resilience, consider "the expected availability of the required capability", where the probability-weighted availability is summed across the scenarios under consideration (Brtis 2016). | |||
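As an illustration of "the expected availability of the required capability", the sketch below sums probability-weighted availability across a set of scenarios. It assumes that the scenarios are mutually exclusive and exhaustive (probabilities sum to 1) and that each scenario's availability is already known as a fraction between 0 and 1. The `Scenario` type, the scenario names, and the numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Scenario:
    name: str
    probability: float   # likelihood of this scenario occurring
    availability: float  # fraction of time the required capability is available


def expected_availability(scenarios: Iterable[Scenario]) -> float:
    """Probability-weighted availability summed across the scenarios."""
    scenarios = list(scenarios)
    total_probability = sum(s.probability for s in scenarios)
    # Assumption: the scenario set is exhaustive, so probabilities sum to 1.
    if abs(total_probability - 1.0) > 1e-9:
        raise ValueError("scenario probabilities must sum to 1")
    return sum(s.probability * s.availability for s in scenarios)


# Hypothetical scenario set for illustration only.
scenarios = [
    Scenario("nominal operations", 0.90, 0.999),
    Scenario("severe storm", 0.08, 0.95),
    Scenario("cyber attack", 0.02, 0.60),
]
result = expected_availability(scenarios)
```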

==Resilience Requirements== | ==Resilience Requirements== | ||

Resilience requirements often take the form of resilience scenarios, which can often be found in ConOps or OpsCons. | |||

The following information is often part of a resilience requirement: | The following information is often part of a resilience requirement (Brtis & McEvilley 2019): | ||

* operational concept name | * operational concept name | ||

* system or system portion of interest | * system or system portion of interest | ||

* capability(s) of interest, their metric(s), and units | * capability(s) of interest, their metric(s), and units | ||

* target value(s); i.e., the required amount of | * target value(s); i.e., the required amount of capabilities | ||

* system modes of operation during the scenario, e.g., operational, training, exercise, maintenance, and update | * system modes of operation during the scenario, e.g., operational, training, exercise, maintenance, and update | ||

* system states expected during the scenario | * system states expected during the scenario | ||

* adversity(s) being considered, their source, and type | * adversity(s) being considered, their source, and type | ||

* potential stresses on the system, their metrics, units, and values (Adversities may affect the system | * potential stresses on the system, their metrics, units, and values (Adversities may affect the system directly or indirectly. Stresses are adversities that directly affect the system.) | ||

* resilience related scenario constraints, e.g., cost, schedule, policies, and regulations | * resilience related scenario constraints, e.g., cost, schedule, policies, and regulations | ||

* timeframe and sub-timeframes of interest | * timeframe and sub-timeframes of interest | ||

* resilience metric, units, determination methods, and resilience metric target | * resilience metric, units, determination methods, and resilience metric target | ||

Importantly, many of these parameters may vary over the timeframe of the scenario (see Figure 2). | * impact on other systems if the system of interest fails its resilience goals | ||

Importantly, many of these parameters may vary over the timeframe of the scenario (see '''Figure 2'''). A single resilience scenario may involve multiple adversities, which may be involved at multiple times during the scenario. | |||

[[File:Time-Wise_Values_of_Notional_Resilience_Scenarios_Parameters.PNG|thumb|center|600px|'''Figure 2. Time-Wise Values of Notional Resilience Scenarios Parameters.''' (Brtis et al. 2021, Used with Permission)]] | [[File:Time-Wise_Values_of_Notional_Resilience_Scenarios_Parameters.PNG|thumb|center|600px|'''Figure 2. Time-Wise Values of Notional Resilience Scenarios Parameters.''' (Brtis et al. 2021, Used with Permission)]] | ||

Resilience requirements are compound requirements. They can be expressed in three forms: (1) natural language, (2) entity-relationship diagram (data structure), and (3) an extension to SysML. All contain the same information, but are in forms that meet the needs of different audiences (Brtis et al. 2021). An example of a natural language pattern for representing a resilience requirement is: | |||

<blockquote>The <system, mode(t), state(t)> encountering <adversity(t), source, type>, which imposes <stress(t), metric, units, value(t)> thus affecting delivery of <capability(t), metric, units> during <scenario timeframe, start time, end time, units> and under <scenario constraints>, shall achieve <resilience target(t) (include excluded effects)> for <resilience metric, units, determination method></blockquote> | |||
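The natural language pattern above can be treated as a template over a simple data structure. The following is a minimal sketch under simplifying assumptions: every field is a plain string, the time-varying parameters (those marked with (t) in the pattern) are collapsed to a single description each, and the class name, field names, and example values are all hypothetical rather than taken from the cited work.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ResilienceRequirement:
    # Each field stands for one angle-bracket slot of the pattern.
    system: str
    mode: str
    state: str
    adversity: str
    stress: str
    capability: str
    timeframe: str
    constraints: str
    target: str
    metric: str

    def to_text(self) -> str:
        """Render the requirement using the natural language pattern."""
        return (
            f"The {self.system} (mode: {self.mode}, state: {self.state}) "
            f"encountering {self.adversity}, which imposes {self.stress} "
            f"thus affecting delivery of {self.capability} "
            f"during {self.timeframe} and under {self.constraints}, "
            f"shall achieve {self.target} for {self.metric}."
        )


# Hypothetical example values for illustration only.
example = ResilienceRequirement(
    system="backup power system",
    mode="operational",
    state="standby",
    adversity="loss of grid supply (source: environment, type: accidental)",
    stress="zero input power for up to 4 hours",
    capability="electrical power delivery in kW",
    timeframe="a 24-hour scenario",
    constraints="the approved maintenance budget",
    target="at least 0.99",
    metric="expected availability of required capability",
)
text = example.to_text()
```

Keeping the slots as structured fields, rather than free text, is one way to hold the same information in both the natural language and data structure forms.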

== Affordable Resilience == | == Affordable Resilience == | ||

"Affordable | "Affordable resilience" means achieving an effective balance between cost and system resilience over the system life cycle. Life cycle considerations for affordable resilience should address not only risks and issues associated with known and unknown adversities over time, but also opportunities for gain in known and unknown future environments. This may require balancing the time value of funding vs. the time value of resilience in order to achieve affordable resilience as shown in '''Figure 3'''. | ||

[[File:SystemResilience_Figure_2_Brtis_AffordableResilience.png|thumb|center|500px|'''Figure 3. Resilience versus Cost.''' (Wheaton 2015, Used with Permission)]] | [[File:SystemResilience_Figure_2_Brtis_AffordableResilience.png|thumb|center|500px|'''Figure 3. Resilience versus Cost.''' (Wheaton 2015, Used with Permission)]] | ||

Once | Once affordable levels of resilience are determined for each key technical attribute, the affordable levels across those attributes can be prioritized via standard techniques, such as Multi-attribute Utility Theory (MAUT) (Keeney and Raiffa 1993) or Analytic Hierarchy Process (AHP) (Saaty 2009). | ||
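As a rough illustration of prioritizing across attributes, the sketch below uses a simplified additive utility model, which is only one special case of MAUT and is valid only when the attributes are assumed to be mutually preferentially independent. The attribute names, weights, single-attribute utilities, and alternatives are hypothetical; in practice they would come from stakeholder elicitation.

```python
from typing import Mapping


def additive_utility(utilities: Mapping[str, float],
                     weights: Mapping[str, float]) -> float:
    """Weighted sum of single-attribute utilities (each scaled to 0..1)."""
    if set(utilities) != set(weights):
        raise ValueError("utilities and weights must cover the same attributes")
    # Assumption: weights are normalized so that they sum to 1.
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(weights[name] * utilities[name] for name in weights)


# Hypothetical weights and alternatives for illustration only.
weights = {"security": 0.5, "recovery_time": 0.3, "cost": 0.2}
alternatives = {
    "A": {"security": 0.9, "recovery_time": 0.4, "cost": 0.6},
    "B": {"security": 0.6, "recovery_time": 0.9, "cost": 0.9},
}

scores = {name: additive_utility(u, weights) for name, u in alternatives.items()}
ranking = sorted(scores, key=scores.get, reverse=True)
```

With these illustrative numbers, alternative B scores higher than A because its advantages in recovery time and cost outweigh its lower security utility.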

The priority of affordable resilience attributes for systems is typically domain-dependent. For example:

* Electronic funds transfer systems may emphasize transaction security, meeting regulatory requirements, and liability risks

* Unmanned space exploration systems may emphasize survivability to withstand previously unknown environments, usually with specified (and often limited) near-term funding constraints

* Electrical power grids may emphasize safety, reliability, and meeting regulatory requirements together with adaptability to changing power generation and storage technologies, shifts in power distribution and usage, etc.

* Medical capacity for disasters may emphasize rapid adaptability, with affordable planning, preparation, response, and recovery levels to meet public health demands. This emphasis must balance potential liability with medical practices, such as triage and “first do no harm”.

== Discipline Relationships ==

Resilience has commonality and synergy with many other quality characteristics. Examples include availability, environmental impact, survivability, maintainability, reliability, operational risk management, safety, security, and quality. This group of quality characteristics forms what is called [[Loss-Driven Systems Engineering (glossary)|loss-driven systems engineering]] (LDSE), because they focus on potential losses involved in developing and using systems (INCOSE 2020). These areas frequently share the assets considered, losses considered, adversities considered, requirements, and architectural, design, and operational techniques. It is imperative that these areas work closely with one another and share information and decision-making.

The concept of pursuing loss-driven systems engineering, its benefits, and the means by which it can be pursued are addressed in the [[A Framework for Viewing Quality Attributes from the Lens of Loss|SEBoK Part 6 article focused on LDSE]] and the 2020 INCOSE INSIGHT issue on LDSE.

==Discipline Standards==

| Line 191: | Line 220: | ||

==Personnel Considerations==

People are important components of systems for which resilience is desired. This aspect is reflected in the human-in-the-loop technique (Jackson and Ferris 2013). A human in the loop brings a unique capability for agile and adaptable thinking and action. Decisions made by people are at the discretion of the decision-makers in real time, as exemplified by the Apollo 11 mission (Eyles 2009).

== Organizational Resilience ==

Because organizational systems and cyber-physical systems differ significantly, it is not surprising that resilience is addressed differently in each. Organizations, as systems, typically view resilience in terms of managing continuity of operations when facing adverse events and apply a host of processes focused on ensuring that the organization’s core functions can withstand disruptions, interruptions, and adversities. ISO 22301 addresses requirements for security and resilience in business continuity management systems. It is an international standard that provides requirements appropriate to the amount and type of impact the organization may or may not accept following a disruption.

Resilient organizations require resilient employees. Resilient organizations and resilient people generally have the following characteristics: (1) they accept the harsh realities facing them, (2) they find meaning in terrible times, and (3) they are creative under pressure, making do with whatever is at hand (Coutu 2002).

Resilient organizations strive to achieve ''strategic resilience'', i.e., "the ability to dynamically reinvent business models and strategies as circumstances change…and to change before the need becomes desperately obvious" (Hamel & Valikangas 2003). An organization with this capability constantly remakes its future rather than defending its past.

Organizational resilience can be measured based on three dimensions: (1) the level of organizational situational awareness, (2) management of organizational vulnerabilities, and (3) organizational adaptive capacity (Lee, Vargo, & Seville 2013). These three dimensions appear to be well accepted and recur in some form in many papers.

Preparing for the unknown is a recurring challenge of resilience. Organizations must frequently deal with adversities that were previously unknown or unknowable by developing ''strategic foresight''. Strategic foresight can be developed by adopting the practice of scenario planning, where multiple adverse futures are envisioned, countermeasures are developed, and coping strategies that appear most frequently are deemed “robust” and candidates for action (Scoblic et al. 2020). This process also trains personnel to better deal with emerging adversity.

==References==

===Works Cited===

Adams, K.M., P.T. Hester, J.M. Bradley, T.J. Meyers, & C.B. Keating. 2014. "Systems Theory as the Foundation for Understanding Systems." ''Systems Engineering,'' 17(1):112-123.

ASIS. 2009. Organizational Resilience: Security, Preparedness, and Continuity Management Systems--Requirements With Guidance for Use. Alexandria, VA, USA: ASIS International.

| Line 253: | Line 242: | ||

Billings, C. 1997. ''Aviation Automation: The Search for a Human-Centered Approach.'' Mahwah, NJ: Lawrence Erlbaum Associates.

Boehm, B. 2013. ''Tradespace and Affordability – Phase 2 Final Technical Report.'' December 31 2013, Stevens Institute of Technology Systems Engineering Research Center, SERC-2013-TR-039-2. Accessed April 2, 2021. Available at <nowiki>https://apps.dtic.mil/dtic/tr/fulltext/u2/a608178.pdf</nowiki>.

Browning, T.R. 2014. "A Quantitative Framework for Managing Project Value, Risk, and Opportunity." ''IEEE Transactions on Engineering Management.'' 61(4): 583-598, Nov. 2014. doi: 10.1109/TEM.2014.2326986.

Brtis, J.S. 2016. ''How to Think About Resilience in a DoD Context: A MITRE Recommendation.'' MITRE Corporation, Colorado Springs, CO. MTR 160138, PR 16-20151.

Brtis, J.S. & M.A. McEvilley. 2019. ''Systems Engineering for Resilience.'' The MITRE Corporation. MP 190495. Accessed April 2, 2021. Available at <nowiki>https://www.researchgate.net/publication/334549424_Systems_Engineering_for_Resilience</nowiki>.

Brtis, J.S. & M.A. McEvilley. 2020. “Unifying Loss-Driven Systems Engineering Activities,” ''INCOSE Insight.'' 23(4): 7-33. Accessed May 7, 2021. Available at <nowiki>https://onlinelibrary.wiley.com/toc/21564868/2020/23/4</nowiki>.

Brtis, J.S., M.A. McEvilley, & M.J. Pennock. 2021. “Resilience Requirements Patterns.” ''Proceedings of the INCOSE International Symposium,'' July 17-21, 2021.

Checkland, P. 1999. ''Systems Thinking, Systems Practice''. New York, NY: John Wiley & Sons.

Clemen, R.T. & T. Reilly. 2001. ''Making Hard Decisions, with DecisionTools.'' Pacific Grove, CA: Duxbury Press.

Coutu, D.L. 2002. “How Resilience Works.” ''Harvard Business Review'', 80(5):46-50.

Cureton, K. 2023. ''About Resilience Engineering in (and of) Digital Engineering''. Presented to the Defense Acquisition University, February 23, 2023. Accessed October 2, 2023. Available at <nowiki>https://media.dau.edu/media/t/1_xfmk3vyh</nowiki>.

DHS. 2017. ''Instruction Manual 262-12-001-01 DHS Lexicon Terms and Definitions 2017 Edition – Revision 2.'' US Department of Homeland Security. Accessed April 2, 2021. Available at <nowiki>https://www.dhs.gov/sites/default/files/publications/18_0116_MGMT_DHS-Lexicon.pdf</nowiki>.

Eyles, D. 2009. "1202 Computer Error Almost Aborted Lunar Landing." Massachusetts Institute of Technology, MIT News. Accessed April 2, 2021. Available at <nowiki>http://njnnetwork.com/2009/07/1202-computer-error-almost-aborted-lunar-landing/</nowiki>.

Hamel, G. & L. Valikangas. 2003. “The Quest for Resilience,” ''Harvard Business Review'', 81(9):52-63.

Hitchins, D. 2009. "What are the General Principles Applicable to Systems?" ''INCOSE Insight.'' 12(4):59-63. Accessed April 2, 2021. Available at <nowiki>https://onlinelibrary.wiley.com/doi/abs/10.1002/inst.200912459</nowiki>.

Hollnagel, E., D. Woods, & N. Leveson (eds). 2006. ''Resilience Engineering: Concepts and Precepts.'' Aldershot, UK: Ashgate Publishing Limited.

INCOSE. 2015. ''Systems Engineering Handbook, a Guide for System Life Cycle Processes and Activities''. New York, NY, USA: John Wiley & Sons.

INCOSE. 2020. "Special Feature: Loss-Driven Systems Engineering," ''INCOSE Insight.'' 23(4): 7-33. Accessed May 7, 2021. Available at <nowiki>https://onlinelibrary.wiley.com/toc/21564868/2020/23/4</nowiki>.

Jackson, S. & T. Ferris. 2013. "Resilience Principles for Engineered Systems." ''Systems Engineering.'' 16(2):152-164. doi:10.1002/sys.21228.

Jackson, S. & T. Ferris. 2016. Proactive and Reactive Resilience: A Comparison of Perspectives. Accessed April 2, 2021. Available at <nowiki>https://www.academia.edu/34079700/Proactive_and_Reactive_Resilience_A_Comparison_of_Perspectives</nowiki>.

Jackson, W.S. 2016. Evaluation of Resilience Principles for Engineered Systems. Unpublished PhD, University of South Australia, Adelaide, Australia.

Keeney, R.L. 1992. ''Value-Focused Thinking, a Path to Creative Decision-making.'' Cambridge, Massachusetts: Harvard University Press.

Keeney, R.L. & H. Raiffa. 1993. ''Decisions with Multiple Objectives.'' Cambridge University Press.

Lee, A.V., J. Vargo, & E. Seville. 2013. "Developing a tool to measure and compare organizations’ resilience." ''Natural Hazards Review'', 14(1):29-41.

Madni, A. & S. Jackson. 2009. "Towards a conceptual framework for resilience engineering." ''IEEE Systems Journal.'' 3(2):181-191.

Neches, R. & A.M. Madni. 2013. "Towards affordably adaptable and effective systems." ''Systems Engineering'', 16: 224-234. doi:10.1002/sys.21234.

OED. 1973. The Shorter Oxford English Dictionary on Historical Principles. Edited by C. T. Onions. Oxford: Oxford University Press. Original edition, 1933.

Ross, R., M. McEvilley, & J. Oren. 2018. "Systems Security Engineering: Considerations for a Multidisciplinary Approach in the Engineering of Trustworthy Secure Systems." National Institute of Standards and Technology (NIST). SP 800-160 Vol. 1. Accessed April 2, 2021. Available at <nowiki>https://csrc.nist.gov/publications/detail/sp/800-160/vol-1/final</nowiki>.

Saaty, T.L. 2009. ''Mathematical Principles of Decision Making.'' Pittsburgh, PA, USA: RWS Publications.

| Line 309: | Line 300: | ||

Scoblic, J.P., A. Ignatius, & D. Kessler. 2020. “Emerging from the Crisis,” ''Harvard Business Review'', July 2020.

Sillitto, H.G. & D. Dori. 2017. "Defining 'System': A Comprehensive Approach." ''Proceedings of the INCOSE International Symposium'' 2017, Adelaide, Australia.

Uday, P. & K. Morais. 2015. Designing Resilient Systems-of-Systems: A Survey of Metrics, Methods, and Challenges. ''Systems Engineering.'' 18(5):491-510.

Warfield, J.N. 2008. "A Challenge for Systems Engineers: To Evolve Toward Systems Science." ''INCOSE Insight.'' 11(1).

Wheaton, M.J. & A.M. Madni. 2015. "Resiliency and Affordability Attributes in a System Integration Tradespace", Proceedings of AIAA SPACE 2015 Conference and Exposition, 31 Aug-2 Sep 2015, Pasadena California. Accessed April 30, 2021. Available at: <nowiki>https://doi.org/10.2514/6.2015-4434</nowiki>.

Winstead, M. 2020. “An Early Attempt at a Core, Common Set of Loss-Driven Systems Engineering Principles.” ''INCOSE Insight.'' 23(4): 22-26.

Winstead, M., D. Hild, & M. McEvilley. 2021. “Principles of Trustworthy Design of Cyber-Physical Systems.” MITRE Technical Report #210263, The MITRE Corporation, June 2021. Available: <nowiki>https://www.mitre.org/publications/technical-papers</nowiki>.

===Primary References===

Hollnagel, E., D.D. Woods, & N. Leveson (Eds.). 2006. [[Resilience Engineering: Concepts and Precepts|''Resilience Engineering: Concepts and Precepts'']]. Aldershot, UK: Ashgate Publishing Limited.

Jackson, S. & T. Ferris. 2013. "[[Resilience Principles for Engineered Systems|''Resilience Principles for Engineered Systems'']]." ''Systems Engineering'', 16(2):152-164.

Jackson, S., S.C. Cook, & T. Ferris. 2015. "[[Towards a Method to Describe Resilience to Assist in System Specification|''Towards a Method to Describe Resilience to Assist in System Specification'']]." ''Proceedings of the INCOSE International Symposium''. Accessed May 25, 2023. Available at <nowiki>https://www.researchgate.net/publication/277718256_Towards_a_Method_to_Describe_Resilience_to_Assist_System_Specification</nowiki>.

Jackson, S. 2016. [[Principles for Resilient Design - A Guide for Understanding and Implementation|''Principles for Resilient Design - A Guide for Understanding and Implementation'']]. Accessed April 30, 2021. Available at <nowiki>https://www.irgc.org/irgc-resource-guide-on-resilience</nowiki>.

Madni, A. & S. Jackson. 2009. "[[Towards a conceptual framework for resilience engineering|''Towards a conceptual framework for resilience engineering'']]." ''IEEE Systems Journal.'' 3(2):181-191.

===Additional References===

9/11 Commission. 2004. 9/11 Commission Report. National Commission on Terrorist Attacks on the United States. Accessed April 2, 2021. Available at <nowiki>https://9-11commission.gov/report/</nowiki>.

Ball, R.E. 2003. ''The Fundamentals of Aircraft Combat Survivability Analysis and Design''. 2nd edition. AIAA (American Institute of Aeronautics & Astronautics) Education Series. August 1, 2003.

Billings, C. 1997. ''Aviation Automation: The Search for a Human-Centered Approach''. Mahwah, NJ: Lawrence Erlbaum Associates.

Bodeau, D.K. & R. Graubart. 2011. ''Cyber Resiliency Engineering Framework.'' The MITRE Corporation. MITRE Technical Report #110237.

DoD. 1985. ''MIL-HDBK-268(AS) Survivability Enhancement, Aircraft Conventional Weapon Threats, Design and Evaluation Guidelines.'' US Navy, Naval Air Systems Command.

Henry, D. & E. Ramirez-Marquez. 2016. "On the Impacts of Power Outages during Hurricane Sandy – A Resilience Based Analysis." ''Systems Engineering.'' 19(1): 59-75. Accessed April 2, 2021. Available at <nowiki>https://onlinelibrary.wiley.com/doi/10.1002/sys.21338</nowiki>.

Jackson, S., S.C. Cook, & T. Ferris. 2015. A Generic State-Machine Model of System Resilience. ''INCOSE Insight.'' 18(1):14-18. Accessed April 2, 2021. Available at <nowiki>https://onlinelibrary.wiley.com/doi/10.1002/inst.12003</nowiki>.

Leveson, N. 1995. Safeware: System Safety and Computers. Reading, Massachusetts: Addison Wesley.

Pariès, J. 2011. "Lessons from the Hudson." in ''Resilience Engineering in Practice: A Guidebook'', edited by E. Hollnagel, J. Pariès, D.D. Woods & J. Wreathall, 9-27. Farnham, Surrey: Ashgate Publishing Limited.

Perrow, C. 1999. ''Normal Accidents: Living With High Risk Technologies.'' Princeton, NJ: Princeton University Press.

Reason, J. 1997. ''Managing the Risks of Organizational Accidents.'' Aldershot, UK: Ashgate Publishing Limited.

Rechtin, E. 1991. ''Systems Architecting: Creating and Building Complex Systems''. Englewood Cliffs, NJ: CRC Press.

Schwarz, C.R. & H. Drake. 2001. ''Aerospace Systems Survivability Handbook Series.'' ''Volume 4: Survivability Engineering.'' Joint Technical Coordinating Group on Aircraft Survivability, Arlington, VA.

US-Canada Power System Outage Task Force. 2004. Final Report on the August 14, 2003 Blackout in the United States and Canada: Causes and Recommendations. Washington-Ottawa.

----

<center>[[System Reliability, Availability, and Maintainability|< Previous Article]] | [[Systems Engineering and Quality Attributes|Parent Article]] | [[Resilience Modeling|Next Article >]]</center>

<center>'''SEBoK v. 2.12, released 27 May 2025'''</center>

[[Category: Part 6]]

[[Category:Topic]]

[[Category:Systems Engineering and Quality Attributes]]

Latest revision as of 00:21, 24 May 2025

Lead Author: John Brtis, Contributing Authors: Ken Cureton, Scott Jackson, Ivan Taylor

Resilience is a relatively new term in the SE realm, appearing around 2006 and becoming popularized in 2010. The application of “resilience” to engineered systems has led to a proliferation of alternative definitions. While the details of definitions will continue to be debated, the information here should provide a working understanding of the meaning and implementation of resilience, sufficient for an engineer to address it effectively.

Overview

Definition

According to the Oxford English Dictionary, resilience is “the act of rebounding or springing back” (OED 1973). This definition fits materials that return to their original shape after deformation. For human-made or engineered systems, the definition of resilience can be extended to "maintaining capability in the face of an adversity."

Some practitioners limit the definition of resilience to only the system reactions following an encounter with an adversity in what is known as the "reactive perspective" regarding system resilience. The "proactive perspective" defines system resilience to include actions that occur before encountering adversity as well. The INCOSE Resilient Systems Working Group (RSWG) asserts that resilience should address both the reactive and proactive perspectives.

The RSWG defines resilience as "the ability to provide required capability when facing adversity", as depicted in Figure 1.

Scope of the Means

In applying this definition, one needs to consider the range of means by which resilience is achieved. The high-level means, which are also the fundamental objectives of resilience, are avoiding, withstanding, and recovering from adversity (Brtis and McEvilley 2019). “Withstanding” and “recovering” from adversity are classic aspects of resilience. For the purpose of engineered systems, “avoiding” adversity is also an important means of achieving resilience (Jackson and Ferris 2016). Finer-grained, low-level means of achieving resilience are discussed in the taxonomy portion of this article.

Scope of the Adversity

An adversity is any condition that could degrade the delivered capability of a system. When discussing system resilience, the full spectrum of sources and types of adversity should be considered. For example, adversity may come from within the system or from the system’s environment, and can be expected or unexpected. Adversity may also come from opponents, friendly parties, or neutral parties, and if from human sources, can be malicious or accidental (Brtis & McEvilley 2020).

“Stress” and “strain” are two useful concepts for understanding adversities. Adversities may affect the system through a causal chain of adversities. The proximate adversities that affect the system directly (rather than indirectly) are called “stresses” on the system. The effects on the system are referred to as “strains” on the system.

It is important to recognize that risks that can impact a system in the future can cause a detrimental strain on the system in the present. Systems should be designed to be resilient to future risks, just as they are to current issues.

Taxonomy for Achieving Resilience

A taxonomy describing the fundamental objectives of resilience and the means for achieving those objectives is valuable to the engineer developing a resilient design. It is important to distinguish “fundamental objectives” from “means objectives” and their impact on trades and engineering decision-making (Clemen & Reilly 2001; Keeney 1992).

A three-layer objectives-based taxonomy that implements this distinction is provided below. The first layer addresses the fundamental objectives of resilience, the second layer addresses the means objectives of resilience, and the third layer addresses architecture, design, and operational techniques for achieving resilience. The three layers are related by many-to-many relationships. The terms and their descriptions are, in many cases, tailored to best address the context of resilience. (Most of the taxonomy content came from Brtis (2016), Jackson and Ferris (2013), and Winstead (2020), with other content developed over time by the RSWG.)

Taxonomy Layer 1: The Fundamental Objectives of Resilience

Fundamental objectives are the first level decomposition of resilience objectives. They establish the scope of resilience. They identify the values pursued by resilience. They represent an extension of the definition of resilience. They are ends in themselves rather than just means to other ends. They should be relatively immutable. Being resilient means achieving three fundamental objectives:

- Avoid adversity: eliminate or reduce exposure to stress

- Withstand adversity: resist capability degradation when stressed

- Recover from adversity: replenish lost capability after degradation

These fundamental objectives can be achieved by pursuing means objectives. Means objectives are not ends in themselves. Their value resides in helping to achieve the three fundamental objectives.

Taxonomy Layer 2: Means Objectives

Means objectives are not ends in themselves but enable achieving the fundamental objectives in Layer 1. This level tends to reflect the stakeholder perspective. The means objectives are high-level functional requirements that should be considered during the stakeholder needs and requirements definition process. The definitions and descriptions are specific to how each concept is applied for resilience.

- adapt: be or become able to deliver required capability in changing situations. This allows systems to function in changing conditions. Adaptability addresses known-unknowns and, potentially, unknown-unknowns. It may be real-time, near-term, or long-term. The system may adapt itself or be adapted by external actors. The driving situation changes may be in the adversities, environment, system state, required capability, mission, business, stakeholders, stakeholder needs and requirements, system requirements, system architecture, or design. Adaptability is related to flexibility, agility, and evolution, and serves as a complement to the “preserve integrity” objective. (See the entry for "evolve" below as well.) Examples of adaptability include adding a crumple zone to an automobile, streaming services shifting to different modalities when faced with unexpectedly high loads or anomalies, self-healing systems, etc.

- anticipate: (1) consider and understand potential adversities, their consequences, and appropriate responses -- before the system is stressed; and/or (2) develop and maintain courses of design and operation that address predicted adversity. Anticipation facilitates the mitigation of risks before they manifest. The "anticipate" objective is similar to the “prepare” objective. Examples include use of prognostic data, predictive maintenance, hurricane early warning systems, and emergency response planning.

- constrain: withstand stress by limiting damage propagation within the system. Examples include fault containment, circuit breakers, fire walls, multiple independent levels of security, vaccines, amputation, etc.

- continue: withstand and recover from stress to deliver required capability, while and after being stressed. Examples include fail-safe, backup capability, redundant capability, fault tolerance, rapid recovery, etc.

- degrade gracefully: withstand stress by transitioning to desirable system state(s) after degradation. This allows partial useful functionality instead of complete system failure. Examples include automobile crumple zone absorption of kinetic energy, deorbiting a satellite, safety system engagement, and failing over to backup systems.

- disaggregate: disperse functions, systems, or system elements. This eliminates a single point of failure and eliminates a single target for opponents. Examples include processor load distribution, mechanical/electrical/thermal load distribution, networks, the nuclear triad, etc.

- evade: avoid adversity through design or action. Examples include stealth and maneuver.

- evolve: adapt over time. Examples include: B-52 transition to multiple roles, upgrading software-defined radio capabilities, and adoption of fuel injection to address fuel economy needs.

- fortify: strengthen the system to withstand stress. Examples include shielding, strengthening weakest “links,” and protective aircraft cockpits.

- manage complexity: reduce adversity or its effects by eliminating unnecessary complexity and the resulting unintended consequences. Unintended consequences can be or can create an adversity, or they may cause an inappropriate response to adversity. Examples include the principle of “Keep it Simple Stupid” and suppressing undesirable emergent behavior.

- minimize adversity: avoid adversity by reducing the amount or effectiveness of adversities. Examples include battlefield preparation, and fighting an infection (biological).

- minimize faults: avoid adversity by reducing the likelihood and severity of adversities arising within the system, such as by using system elements with a long mean time to failure.

- monitor: observe and evaluate changes or developments that could lead to degradation. This allows projection and anticipation of future status, to allow early detection and early response. An example would be smart grid real-time power monitoring.

- preserve integrity: withstand adversity by remaining complete and unaltered. This is a complement to “adapt/evolve.” Examples include blockchain, which provides tamper-proof data logging, and cryptographic digital signatures.

- prevent: avoid degradation by precluding the realization of strain on the system. Examples include backup power to serve critical system capability, anti-corrosion coatings, etc.

- reduce vulnerability: better withstand adversity by identifying system vulnerabilities and modifying the system to reduce the degradation caused by adversity. Examples include performing and acting on Failure Modes and Effects Analysis (FMEA), reducing the attack surface, hardening vulnerable components, and flood-resistant urban infrastructures.

- repair: recover by fixing damage.

- replace: recover by substituting a capable element for a degraded one.

- tolerate: withstand degraded capability. Examples include excess margin, RAID storage, and aircraft hydraulic redundancy.

- understand: develop and maintain useful representations of required system capabilities, how those capabilities are generated, the system environment, and the potential for degradation due to adversity. Examples include digital twins and battlefield simulations.

Taxonomy Layer 3: Architecture, Design, and Operational Techniques to Achieve Resilience Objectives

Architecture, design, and operational techniques that may achieve resilience objectives are listed below. This level tends to represent the system viewpoint, and should be considered during the system requirements, system architecture, design definition, and operation processes. The definitions and descriptions are specific to how each concept is applied for resilience.

- absorption: withstanding adversity by assimilating stress without unacceptable degradation of the system capability. Examples include dissipating kinetic energy with automobile crumple zones.

- adaptability: see “adapt” in layer 2 above. Adaptability has commonality with flexibility, agility, improvising, and overcoming.

- anomaly detection: discovering salient abnormalities in the system or its environment to enable effective response.

- backward recovery: recovery to a previous stable state of a system.

- boundary enforcement: implementing the process, temporal, and spatial limits to protect the system.

- buffering: reducing the effect of degradation through excess capacity.

- coordinated defense: having multiple, synergistic mechanisms to protect required capability, such as via the defense-in-depth strategy.

- deception: confusing and thus impeding an adversary.

- defense-in-depth: minimizing loss by employing multiple coordinated mechanisms, each of which can at least partially achieve resilience.

- detection avoidance: reducing an adversary's awareness of the system. Examples include stealth.

- distributed privilege: requiring multiple authorized entities to act in a coordinated manner before a system function can proceed. Examples include authorization for use of nuclear weapons.

- distribution: spreading the system’s ability to perform – physically or virtually.

- diversification: use of heterogeneous design techniques to minimize common vulnerabilities and common mode failures. Examples include heterogeneous technologies, data sources, processing locations, equipment locations, supply chains, and communications paths.

- domain separation: physically or logically isolating items with different protection needs.

- drift correction: monitoring the system’s movement toward the boundaries of proper operation and taking corrective action.

- dynamic positioning: relocation of system functionality or components to confound opponent understanding of the system.

- effect tolerance: providing required capability despite damage to the system.

- error recovery: detection, control, and correction of internal errors.

- fail soft: prioritizing and gradually terminating affected functions when failure is imminent.

- fault tolerance: providing required capability in spite of faults.

- forward recovery: recovery by restoring the system to a new, not previously occupied state in which it can perform required functions.

- human participation: including people as part of the system. A human in the loop brings a unique capability for agile and adaptable thinking and action.

- least functionality: system elements accomplish their required functions, but no more.

- least persistence: system elements are available, accessible, and fulfill their design intent only while needed.

- least privilege: system elements are allocated authorizations necessary to accomplish their specified functions, but not more.

- least sharing: system resources are accessible by multiple system elements only when necessary, and to as few system elements as possible.

- loose coupling: minimizing the interdependency of elements. This can reduce the potential for propagation of damage.

- loss margins: excess capability, so partial capability degradation is acceptable.

- maintainability: ability to be retained or restored to perform as required.

- mediated access: controlling the ability to access and use system elements.

- modeling and analytic monitoring: developing a representation of the system, then gathering and analyzing data based on that understanding to identify vulnerabilities, find indications of potential or actual adverse conditions, identify potential or actual system degradation, and evaluate the efficacy of system countermeasures.

- modularity: composing a system of discrete elements so that a change to one component has a minimal impact on other elements.

- neutral state: providing a condition of the system where stakeholders (especially operators) can safely take no action while awaiting determination of the most appropriate action.

- protection: mitigation of stress to the system.

- protective defaults: providing default configurations of the system that provide protection.

- protective failure: ensuring that failure of a system element does not result in an unacceptable loss of capability.

- protective recovery: ensuring that recovery of a system element does not result in unacceptable loss of capability.

- realignment: reconfiguring the system architecture to improve the system’s resilience.

- rearchitect/redesign: modifying the system elements or system structure for improved resilience.

- redeploy: reorganizing resources to provide required capabilities and address adversity or degradation. Examples include rapid launch to replace satellites lost from a constellation and emergency vehicle repositioning during disasters.

- redundancy: having more than one means for performing the required function. This can mitigate single point failures. Examples include multiple pumps in parallel.

- redundancy (functional): achieving redundancy by heterogeneous means. This can mitigate common mode failures and common cause failures. This is also called “heterogeneous redundancy.”

- redundancy (physical): achieving redundancy by more than one identical element.

- repairability: the ease with which a system can be restored to an acceptable condition.

- replacement: changing system elements to regain capability.

- safe state: providing the ability to transition to a state that does not lead to unacceptable loss of capability.

- segmentation: separation (logically or physically) of elements to limit the spread of damage.

- shielding: interposition of items (physical or virtual) that inhibit the adversity’s ability to stress the system.

- substantiated integrity: ability to ensure and prove that system elements have not been corrupted.

- substitution: using new system elements to provide or restore capability.

- unpredictability: making changes randomly that confound an opponent’s understanding of the system.

- virtualization: creating a simulated, rather than actual, version of something. This facilitates stealth, dynamic positioning, and unpredictability. Examples include virtual computer hardware platforms, storage devices, and network resources.

The means objectives and architectural and design techniques will evolve as the resilience engineering discipline matures.
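As one illustration of how a layer 3 technique might be realized, the "fail soft" entry above (prioritizing and gradually terminating affected functions) can be sketched as priority-based shedding. This is a minimal sketch under stated assumptions: the function name `fail_soft`, the tuple layout, the priority convention, and the example functions and numbers are all hypothetical and do not come from the source.

```python
def fail_soft(functions, capacity):
    """Shed the least important functions until total demand fits capacity.

    functions: list of (name, priority, demand) tuples. A lower priority
               number means a more important function (assumed convention).
    capacity:  resource still available after the adversity (assumed units).
    Returns (kept, shed), where shed is in the order functions were terminated.
    """
    # Most important first, so the least important sits at the end of the list.
    active = sorted(functions, key=lambda f: f[1])
    shed = []
    # Terminate one function at a time: gradual rather than all-at-once.
    while active and sum(f[2] for f in active) > capacity:
        shed.append(active.pop())
    return [f[0] for f in active], [f[0] for f in shed]


# Hypothetical aircraft electrical loads, for illustration only.
loads = [
    ("flight control", 1, 40),
    ("navigation", 2, 30),
    ("climate control", 3, 25),
    ("cabin entertainment", 4, 20),
]
kept, shed = fail_soft(loads, capacity=60)
print("kept:", kept)
print("shed:", shed)
```

The sketch terminates functions strictly in priority order; a real design would also need to account for dependencies between functions and for the time available before failure.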

The Resilience Process

Resilience should be considered throughout a system's life cycle, but most especially in early life cycle activities that lead to resilience requirements. Once resilience requirements are established, they can and should be managed along with all the other requirements in the trade space. Specific considerations for inclusion in early life cycle activities can include the items listed below (Brtis and McEvilley 2019).

- Business or Mission Analysis Process

  - Defining the problem space should include identification of adversities and expectations for performance under those adversities.

  - ConOps, OpsCon, and solution classes should consider the ability to avoid, withstand, and recover from adversities.

  - Evaluation of alternative solution classes must consider ability to deliver required capabilities under adversity.

- Stakeholder Needs and Requirements Definition Process

  - The stakeholder set should include people who understand potential adversities and stakeholder resilience needs.

  - When identifying stakeholder needs, identify expectations for capability under adverse conditions and degraded/alternate, but useful, modes of operation.

  - Operational concept scenarios should include resilience scenarios.

  - Transforming stakeholder needs into stakeholder requirements includes stakeholder resilience requirements.

  - Analysis of stakeholder requirements includes resilience scenarios in the adverse operational environment.

- System Requirements Definition Process

  - Resilience should be considered in the identification of requirements.

  - Achieving resilience and other adversity-driven considerations should be addressed in concert.

- Architecture Definition Process

  - Selected viewpoints should support the representation of resilience.

  - Resilience requirements can significantly limit and guide the range of acceptable architectures. Resilience requirements must be mature when used for architecture selection.

  - Individuals developing candidate architectures should be familiar with architectural techniques for achieving resilience.

  - Achieving resilience and other adversity-driven considerations should be addressed in concert.

- Design Definition Process

  - Individuals developing candidate designs should be familiar with design techniques for achieving resilience.

  - Achieving resilience and the other adversity-driven considerations should be addressed in concert.

- Risk Management Process

  - Risk management should be planned to handle risks, issues, and opportunities identified by resilience activities.

Resilience Metrics

Commonly used resilience metrics include the following (Uday and Marais 2015):

- Time duration of failure

- Time duration of recovery

- Ratio of performance recovery to performance loss

- The speed of recovery

- Performance before and after the disruption and recovery actions

- System importance measures

However, resilience principles that are implied or substantiated by the recommendations of domain experts are often missing in practice (Jackson and Ferris 2013). This has led to the development of a new metric for evaluating systems in several domains (aviation, fire protection, rail, and power distribution) to address resilience principles that are often omitted (Jackson 2016).
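Several of the commonly used metrics listed above (duration of failure, duration of recovery, ratio of recovery to loss, speed of recovery, and performance before and after) can be read off a sampled performance trace. The sketch below shows one possible way to do so. The cited sources do not prescribe these exact definitions, so the function name `disruption_metrics`, the single-disruption assumption, the `required` threshold, and the sample data are all illustrative assumptions.

```python
def disruption_metrics(times, performance, required):
    """Summarize one disruption in a sampled performance trace.

    Assumed definitions (illustrative only):
      failure_duration  = time from first sample below `required` until
                          performance is back at or above `required`
      recovery_duration = time from the lowest sample until that return
      recovery_ratio    = performance regained / performance lost
      recovery_speed    = performance regained / recovery_duration
    """
    if not times or len(times) != len(performance):
        raise ValueError("times and performance must be non-empty and equal in length")

    below = [i for i, p in enumerate(performance) if p < required]
    if not below:
        return None  # performance never dropped below the required level

    start = below[0]
    # Index of the lowest performance at or after the start of the disruption.
    low = min(range(start, len(performance)), key=lambda i: performance[i])
    # First sample at or after the low point that meets the requirement,
    # or the last sample if the trace ends before full recovery.
    end = next(
        (i for i in range(low, len(performance)) if performance[i] >= required),
        len(performance) - 1,
    )

    before = performance[start - 1] if start > 0 else performance[start]
    after = performance[end]
    lost = before - performance[low]
    regained = after - performance[low]
    recovery_duration = times[end] - times[low]

    return {
        "failure_duration": times[end] - times[start],
        "recovery_duration": recovery_duration,
        "performance_before": before,
        "performance_after": after,
        "recovery_ratio": regained / lost if lost > 0 else None,
        "recovery_speed": regained / recovery_duration if recovery_duration > 0 else None,
    }


# Hypothetical trace: performance in percent of nominal, time in hours.
metrics = disruption_metrics(
    times=[0, 1, 2, 3, 4, 5, 6],
    performance=[100, 100, 60, 40, 70, 100, 100],
    required=90,
)
print(metrics)
```

A trace with several separate disruptions would need to be split into segments first, since this sketch only characterizes the first drop below the required level.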

Numerous candidate resilience metrics have also been identified, including the following (Brtis 2016):

- Maximum outage period

- Maximum brownout period

- Maximum outage depth

- Expected value of capability: the probability-weighted average of capability delivered

- Threat resiliency; i.e. the time-integrated ratio of the capability provided divided by the minimum needed capability

- Expected availability of required capability; i.e. the likelihood that for a given adverse environment the required capability level will be available

- Resilience levels; i.e. the ability to provide required capability in a hierarchy of increasingly difficult adversity

- Cost to the opponent

- Cost-benefit to the opponent

- Resource resiliency; i.e. the degradation of capability that occurs as successive contributing assets are lost

Multiple metrics may be required depending on the situation. If one has to select a single most effective metric for reflecting the meaning of resilience, consider "the expected availability of the required capability", where the probability-weighted availability is summed across the scenarios under consideration (Brtis 2016).
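The "expected availability of the required capability" described above is a probability-weighted sum across scenarios. A minimal sketch of that arithmetic follows, assuming each scenario has been assigned a probability and an availability (the fraction of time the required capability level is delivered in that scenario). The names `Scenario` and `expected_availability` and the example figures are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Scenario:
    name: str
    probability: float   # likelihood of this scenario; all scenarios must sum to 1
    availability: float  # fraction of time required capability is available, 0..1


def expected_availability(scenarios):
    """Return the probability-weighted availability summed over all scenarios."""
    total_probability = sum(s.probability for s in scenarios)
    if not math.isclose(total_probability, 1.0, abs_tol=1e-9):
        raise ValueError("scenario probabilities must sum to 1")
    return sum(s.probability * s.availability for s in scenarios)


# Hypothetical scenario set, for illustration only.
scenarios = [
    Scenario("nominal operations", 0.90, 0.999),
    Scenario("cyber attack", 0.07, 0.80),
    Scenario("regional flood", 0.03, 0.50),
]
print(f"expected availability: {expected_availability(scenarios):.4f}")
```

The result depends on how completely the scenario set covers the adversities of interest, so the check that probabilities sum to 1 is a reminder that a "no adversity" scenario usually belongs in the set.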

Resilience Requirements

Resilience requirements often take the form of resilience scenarios, which can typically be found in ConOps or OpsCons.

The following information is often part of a resilience requirement (Brtis and McEvilley 2019):

- operational concept name

- system or system portion of interest

- capability(s) of interest, their metric(s), and units

- target value(s); i.e., the required amount of capabilities

- system modes of operation during the scenario, e.g., operational, training, exercise, maintenance, and update

- system states expected during the scenario

- adversity(s) being considered, their source, and type

- potential stresses on the system, their metrics, units, and values (Adversities may affect the system directly or indirectly. Stresses are adversities that directly affect the system.)

- resilience-related scenario constraints, e.g., cost, schedule, policies, and regulations

- timeframe and sub-timeframes of interest

- resilience metric, units, determination methods, and resilience metric target

- impact on other systems if the system of interest fails its resilience goals

Importantly, many of these parameters may vary over the timeframe of the scenario (see Figure 2). A single resilience scenario may involve multiple adversities, which may occur at multiple times during the scenario.
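One way to capture such a requirement in a form that tools can check is a structured record in which time-varying parameters are attached to sub-timeframes. The sketch below is an assumption about how the listed fields might be organized, covering only a subset of them. The class names, field names, and example values are hypothetical and are not defined by the source.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class SubTimeframe:
    start: float                 # start time within the scenario (assumed hours)
    end: float                   # end time, exclusive
    adversities: List[str]       # adversities active during this interval
    target_value: float          # required amount of capability in this interval


@dataclass
class ResilienceScenario:
    operational_concept: str
    system_of_interest: str
    capability: str
    capability_units: str
    resilience_metric: str
    resilience_target: float
    modes: List[str] = field(default_factory=list)
    sub_timeframes: List[SubTimeframe] = field(default_factory=list)

    def _interval_at(self, t: float) -> Optional[SubTimeframe]:
        """Return the sub-timeframe containing time t, if any."""
        for sub in self.sub_timeframes:
            if sub.start <= t < sub.end:
                return sub
        return None

    def target_at(self, t: float) -> Optional[float]:
        """Required capability at time t, or None outside the scenario."""
        sub = self._interval_at(t)
        return sub.target_value if sub else None

    def adversities_at(self, t: float) -> List[str]:
        """Adversities in effect at time t (empty outside the scenario)."""
        sub = self._interval_at(t)
        return list(sub.adversities) if sub else []


# Hypothetical scenario, for illustration only.
scenario = ResilienceScenario(
    operational_concept="regional power delivery",
    system_of_interest="distribution grid",
    capability="delivered power",
    capability_units="MW",
    resilience_metric="expected availability of required capability",
    resilience_target=0.95,
    modes=["operational"],
    sub_timeframes=[
        SubTimeframe(0, 24, [], 500.0),
        SubTimeframe(24, 48, ["storm"], 300.0),
        SubTimeframe(48, 72, ["storm", "cyber attack"], 200.0),
    ],
)
print(scenario.target_at(30), scenario.adversities_at(50))
```

Keeping targets and adversities per sub-timeframe reflects the point that a single scenario may involve different adversities and different required capability levels at different times.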